Hello dear community, it’s Bernhard here again! Not the one with the jackhammer, but the one with the soldering iron. Igor really knows how to inspire with great projects and ideas, so I was recruited once again to turn a small project into reality for him. What is it about? Igor wants to measure the real (human-perceived) latency of peripheral devices non-invasively and with as little added delay as possible. NVIDIA’s LDAT is end-of-life (EOL), and if you want to be future-proof, you have to free yourself from such dependencies as quickly as possible. In concrete terms, the prototype of the measurement equipment must detect when a button is pressed and measure how long it takes for something to change on the screen. The simplest option would be to solder measuring leads to the buttons of mice and keyboards, but who is able and willing to disassemble, solder, measure and then reassemble several peripheral devices in day-to-day editorial work? Nobody!

In an earlier article on the basics, we already took a very close look at NVIDIA’s LDAT – a tool that measures the time between a mouse click and a visible reaction on the screen. LDAT was long regarded as the reference solution because it was able to deliver reproducible results and was platform-independent. At first glance, the methodology – a click signal coupled with a light sensor on the monitor – was as simple as it was effective and showed how important it is to measure click-to-display latency in a reproducible way.

However, this approach also revealed its weaknesses. LDAT only works indirectly, is very sensitive to external influences and makes it difficult to compare different setups. Above all, however, NVIDIA has since discontinued the tool, meaning that it is neither further developed nor available. This creates a dangerous dependency for anyone who wants to take latency measurements seriously in the long term – and that’s exactly what we wanted to break.

For this reason, we decided to develop our own alternative. Our aim was to adopt the strengths of LDAT, but at the same time achieve greater flexibility, independence and practicality. The result is a completely non-invasive system that combines modern sensor technology with fast processing and provides the measured values directly in an analyzable form. This not only makes us independent of a proprietary solution, but also creates a future-proof basis for reproducible measurements in everyday editorial work.

Where we take targeted action

- Completely non-invasive: no need to re-solder the mouse or keyboard, no contact pins. Acoustic and optical sensors record the clicks and the screen reactions.
- Modular sensor technology: a MEMS microphone for sound events, an ultra-fast phototransistor for screen light and a high-performance ARM controller ensure short response times and flexible customization options.
- Built-in calibration: a potentiometer for noise suppression, calibration of light and sound detection, and the ability to adapt to different monitors and peripherals without manual adjustment.
- Genuine editorial suitability: the data lands directly on the OLED display, is output via COM port and is also saved as a CSV. No time-consuming post-processing is required, and the prototype is ready for immediate use without special hardware.
- Future-proof and open: the system can be further developed and operated permanently without depending on proprietary special hardware, regardless of whether NVIDIA or another provider discontinues or changes a tool.

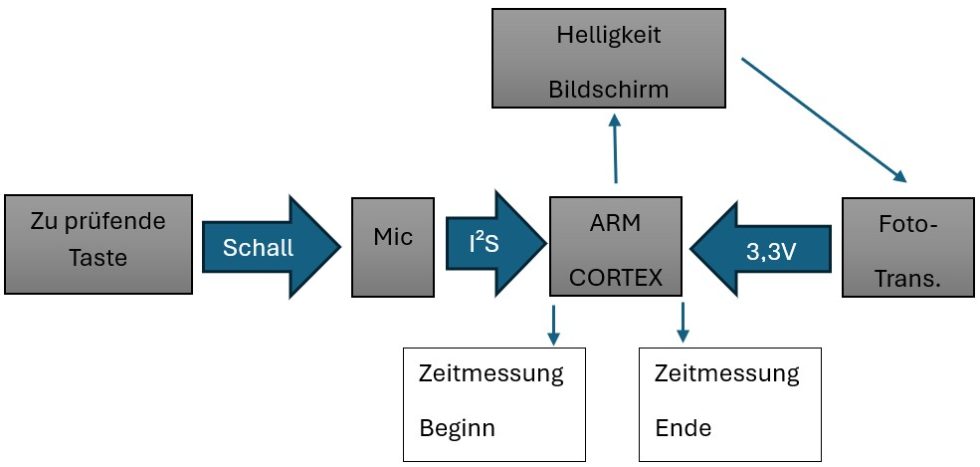

The concept at a glance and in block form

Accessing the measured values without intervening in the hardware is not that easy. That’s why the idea is to record the mouse click or keystroke acoustically and then process it further. Another option would be to work with a laser, but we use a digital MEMS microphone to record the trigger sound. The processing component is an ARM Cortex-M7, whose 600 MHz clock rate gives us low latency and fast processing. To do this, we also need desktop software that switches from light to dark as soon as sound is detected.

The microcontroller then measures and calculates the time from the sound event to the color change on the monitor. Before this, however, the average value of the internal measurement must be calibrated, as must the black and white values for the phototransistor. This is important for reproducible results and has the advantage that we can adapt to a wide variety of screens. Because MEMS microphones are so sensitive, they also need a setting to filter out background noise, a kind of noise gate.
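To make the measurement principle concrete, here is a minimal sketch in Python that simulates the calculation on a PC. It is not the firmware running on the microcontroller. It assumes that the black/white calibration yields a midpoint threshold, that the "internal measurement" is an average internal delay subtracted from each result, and that timestamps are in microseconds. All names and values (`calibrate_threshold`, `latency_ms`, `internal_offset_us`, the ADC readings) are hypothetical.

```python
from statistics import mean

def calibrate_threshold(black_samples, white_samples):
    """Midpoint between the averaged dark and bright phototransistor readings."""
    return (mean(black_samples) + mean(white_samples)) / 2

def first_dark_crossing_us(light_samples, threshold, not_before_us):
    """Timestamp (in µs) of the first sample below the threshold, or None."""
    for t_us, level in light_samples:
        if t_us >= not_before_us and level < threshold:
            return t_us
    return None

def latency_ms(t_sound_us, light_samples, threshold, internal_offset_us=0):
    """Time from the sound event to the light-to-dark change, in milliseconds.

    internal_offset_us is the assumed, previously calibrated internal delay
    of the measuring device itself; it is subtracted from the raw result.
    """
    t_light_us = first_dark_crossing_us(light_samples, threshold, t_sound_us)
    if t_light_us is None:
        return None  # the screen never went dark within the recording
    return (t_light_us - t_sound_us - internal_offset_us) / 1000

# Hypothetical calibration readings and one simulated measurement.
threshold = calibrate_threshold([98, 102, 100], [898, 902, 900])
light = [(0, 900), (5000, 900), (12000, 880), (12500, 450), (13000, 110)]
result = latency_ms(t_sound_us=1000, light_samples=light,
                    threshold=threshold, internal_offset_us=300)
print(result)
```

Calibrating per screen matters here because the threshold sits between that particular monitor's black and white levels, so a dim panel and a bright panel both produce a clean crossing.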

In our case, this is set using a potentiometer. Two LEDs, red and green, tell us whether the microphone has triggered or the calibration button has been pressed. After the measurement, the values should be immediately available for Igor to evaluate, so we show them on an OLED display, send them as a message via a COM port and save them as a CSV table on a micro SD card.
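The noise gate and the CSV output can be sketched in a similar way. The following Python example is only an illustration under stated assumptions: a 10-bit potentiometer reading mapped linearly onto a 16-bit microphone amplitude range, and a two-column CSV layout. The real device may scale the threshold and structure its file differently, and the names `gate_threshold`, `detect_click` and `write_csv` are hypothetical.

```python
import csv
import io

ADC_MAX = 1023         # assumed 10-bit potentiometer reading
MAX_AMPLITUDE = 32767  # assumed 16-bit signed microphone samples

def gate_threshold(pot_reading):
    """Map the potentiometer position linearly onto an amplitude threshold."""
    return pot_reading / ADC_MAX * MAX_AMPLITUDE

def detect_click(samples, threshold):
    """Index of the first sample whose magnitude exceeds the gate, or None."""
    for i, sample in enumerate(samples):
        if abs(sample) > threshold:
            return i
    return None

def write_csv(measurements_ms, stream):
    """Write one row per measurement; the column names are an assumption."""
    writer = csv.writer(stream)
    writer.writerow(["run", "latency_ms"])
    for run, value in enumerate(measurements_ms, start=1):
        writer.writerow([run, f"{value:.2f}"])

# Background noise stays below the gate; only the loud click triggers.
samples = [120, -340, 90, -21000, 15000]
click_index = detect_click(samples, gate_threshold(512))

buffer = io.StringIO()
write_csv([11.2, 12.9], buffer)
rows = buffer.getvalue().splitlines()
print(click_index, rows)
```

Turning the potentiometer up raises the threshold, so a noisy room needs a higher setting, at the cost of missing very quiet switches.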

https://www.igorslab.de/kabellose-vs-kabelgebundene-maeuse-latenzunterschiede-messen-nvidia-ldat-v2-im-praxistest/
