When you hold a Radeon card in your hand today, you are looking at the product of a company that is officially called AMD, but has an engineering culture that has grown over three decades and clearly originates from Markham, Ontario. It all began there in 1985 with three founders who set up their own company out of pragmatism rather than a grand Silicon Valley vision. “Array Technologies Inc.” later became ATI, and hardly anyone in the team had any idea at the time that one of the most influential GPU schools in the world would emerge from these unassuming offices. The early stories from Markham sound almost iconic today: late-night prototype sessions, improvised labs, heated discussions about pipeline timings in the hallway and engineers such as Allan Cantle, Eric Demers and Dave Orton, who, with a mixture of inquiring minds and stubbornness, repeatedly pushed through things that were actually too risky on paper.

They optimized, experimented, discarded and started again, often literally overnight. A developer is said to have once joked that ATI was less a company than a “controlled technical chaos”. But it was precisely this chaos that was productive. It produced architectures that were ahead of their time, sometimes crude, but almost always bold. Many of the ideas that are visible in RDNA4 today have their intellectual roots in this era: pragmatic cache hierarchies, flexible shader pipelines, the courage to rethink things instead of just optimizing them.

But the story was never linear. The Rage generation laid the foundations, the Radeon 9700 Pro shook up the market, GCN dominated consoles, Polaris filled the gap, Vega dared to do the technically impossible, and RDNA became a conscious new beginning. ATI and later AMD experienced both highs and crashes. The legendary R300 era caused NVIDIA to falter, while Terascale and early GCN variants were often difficult to tame despite brilliant approaches. Polaris saved AMD massive amounts of time commercially, Vega showed technical ambitions that were gigantic on paper, and the Radeon VII seemed like a compute chip that accidentally ended up in gaming.

Markham remained the constant core through all these phases. Even after the acquisition by AMD in 2006, the teams there remained largely unchanged – same developers, same discussions, same long nights of work, just with bigger budgets and broader platform responsibilities. Anyone analyzing RDNA4 technically today will immediately recognize this signature: structured but never over-regulated, efficient but not dogmatic, and always built with the idea in mind that future generations will have to build on it.

The early architectures: From fixed function blocks to the first programmable pipelines

The early ATI architectures came from a time when the GPU landscape was more like a patchwork quilt than a definable technology field. Each company had its own philosophy, its own specialty blocks and, above all, its own ideas of what a graphics chip should actually do. ATI’s initial focus was less on maximum performance and more on a broad feature set for OEMs who needed stable 2D output, basic 3D capabilities and usable video acceleration. The Rage families reflected this pragmatic thinking: clearly separated functional blocks that all did their job, but were never as tightly integrated as later, modern pipelines.

Despite this conservative approach, ATI experimented surprisingly early with pipeline optimizations that were bold by the standards of the time. HyperZ, for example, an early form of Z-compression and Z-hierarchy, was not marketed as a major revolution, but had a significant impact on efficiency. The feature saved work steps before they even occurred and gave ATI cards an unexpected lightness in some scenes. The fact that HyperZ lived on almost unchanged in later Radeon generations speaks volumes: it was one of those inconspicuous but brilliant components of an architecture that never got the fame it deserved.

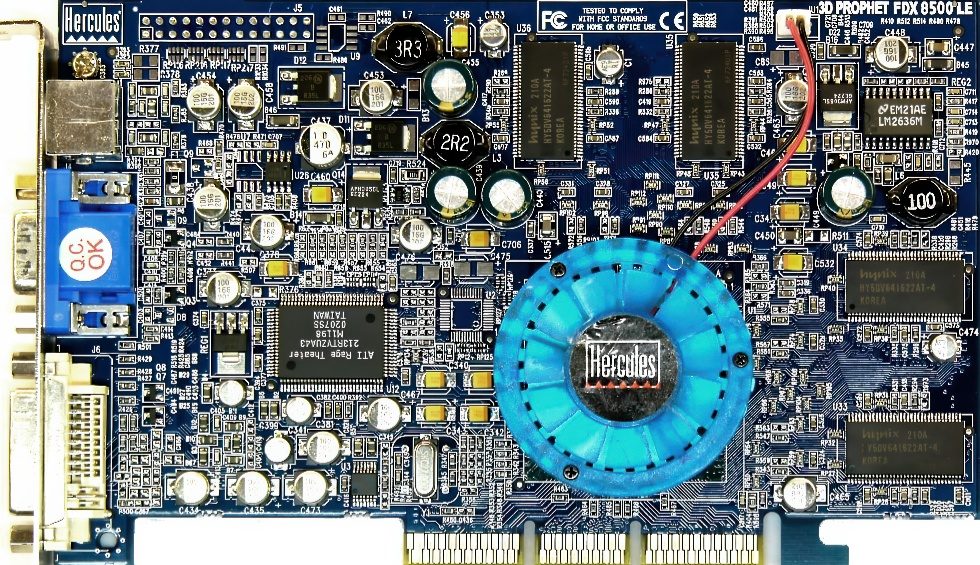

A real change of style followed with the Radeon 8500. While the Rage chips were still tied to classic function blocks, ATI began to work with programmable shaders here. The engineers did not claim to define the future of graphics back then. Many things were tried out because they were technically interesting and because smaller teams in Markham had the freedom to implement ideas that would have immediately failed elsewhere due to budget or bureaucracy. The VLIW-like structures that the 8500 worked with were complicated, but opened up a flexibility that few manufacturers knew how to use at the time.

It was the time when 3D programming really began to be understood. Shaders became longer, more complex and more individual. Games began to define their own effects instead of relying solely on fixed function pipelines. ATI did not try to make everything perfect, but rather to leave as many possibilities open as possible. This courage for programmable fuzziness later led directly to GCN and influenced RDNA, as many of the scheduler and parallelization-related challenges already appeared in the early VLIW experiments. This early phase may have seemed unstructured on the outside, but on the inside it laid the foundation for what would later become Radeon: an architecture made not just of fixed building blocks, but of ideas that could survive for generations. ATI learned early on that graphics is not static, but a moving target. And it was precisely this realization that shaped the company more than any individual chip.

R300 and R350: The architectures that shook NVIDIA

The introduction of R300 in 2002 was one of those rare moments when a GPU generation wasn’t just faster or more modern, but literally shook up the market and the competition. The Radeon 9700 Pro did not come as an evolutionary step, but as a brute technological leap on a par with a new era. Many engineers working on R300 in Markham at the time later recounted that even internal performance simulations were initially thought to be flawed because the gaps to NVIDIA’s chips at the time seemed too big to be real. But they were real.

Technically, R300 was a masterpiece in many ways. The architecture combined eight fully-fledged pixel pipelines with an exceptionally good memory organization at the time. The crossbar memory controller was way ahead of its time and ensured that bandwidth was not simply increased, but used more efficiently. The Early-Z logic, which discarded unnecessary pixel operations before they even cost shader time, was a massive efficiency gain. And the shaders themselves were remarkably flexible and highly parallelized by the standards of the time. Incidentally, many of the decisions that made R300 successful were less the result of long-term planning than the simple ambition of small ATI teams who wanted to prove themselves.

NVIDIA’s reaction at the time was correspondingly nervous. The GeForce4 was suddenly no longer competitive and the hastily developed successor NV30 (GeForce FX 5800) became a disaster that was referred to internally as the “Dustbuster episode” for a long time – an allusion to the noisy cooling system that was necessary to operate the chip stably at all. In development circles, it was said that ATI had “dismantled” NVIDIA’s entire roadmap, and for the first time in GPU history, NVIDIA actually had to run after ATI instead of the other way around.

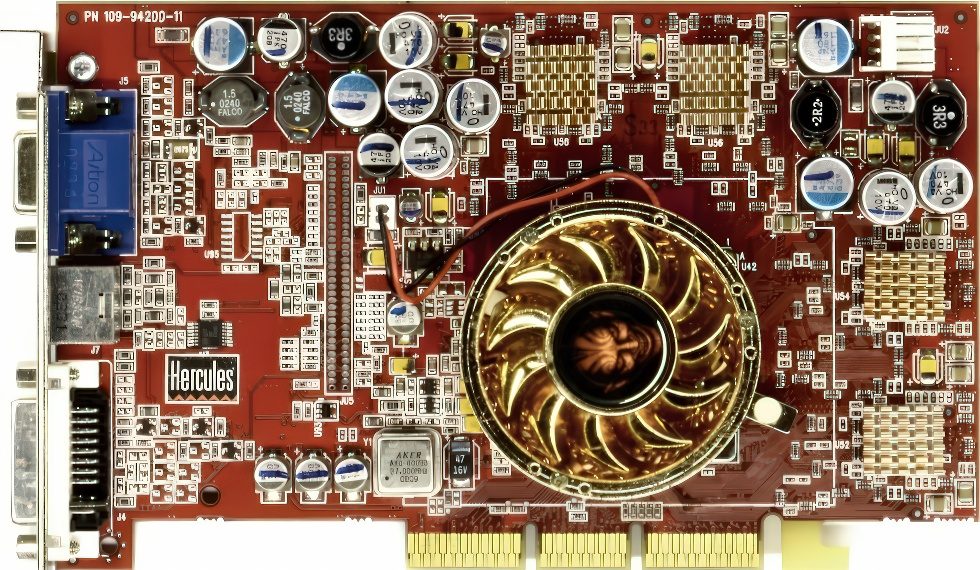

The successor R350, better known as the Radeon 9800 Pro, was less a new beginning than a perfection. The architecture was refined, shader handling was optimized and the memory controller was further trimmed for efficiency. The card was famous for its robustness, its surprisingly good thermal balance and – still a talking point in enthusiast circles today – its generous production yields. Some 9800-SE or 9800-non-Pro models included fully functional units that were only disabled for marketing or yield reasons. Back then, if you knew how to modify a BIOS or use the right tools, you could suddenly unlock almost the performance level of a top model from a mid-range card.

It was a time when graphics cards still offered real adventure, and many enthusiasts today remember with a smile how they discussed “pipeline unlocks”, shader masks or SoftMods in forums. In my own tests, the 9800 Pro was one of the most stable cards I’ve ever had in the lab – unimpressed by long load phases, good-natured when overclocking and with an architecture that was simply logical and clean. You could tell it came from a time when technical elegance and efficiency were more important than tight time-to-market constraints.

This made R300 an architecture that truly shook NVIDIA. Not just performance-wise, but also psychologically. It forced NVIDIA to rethink its entire strategy and indirectly led to the NV40 and later G80 generations, which in turn dominated the market. In this sense, R300 was not just a successful GPU, but a trigger for an innovation cycle that accelerated the industry for years. The entire R300/R350 era was thus a prime example of what the ATI school of the time was capable of: clever memory hierarchies, elegant pipeline organization, solid thermal design, and a fair amount of courage. Elements of this can still be found in RDNA today – a testament to an architecture that has had a lasting effect for over two decades.

AMD takes over ATI and reorganizes the world

The acquisition of ATI by AMD in 2006 was a strategic turning point for both companies. AMD urgently needed a GPU division to position itself beyond classic CPUs, while ATI was technically strong but increasingly under pressure financially. For ATI, the merger did not mean the end of its own identity, but rather an expansion of its possibilities. The Graphics teams in Markham remained largely unchanged, including their work culture and development processes. Instead of a full merger, a loosely coupled technical partnership was formed, with ATI contributing its GPU expertise and AMD providing additional access to capital, research and platform integration.

Technologically, the framework changed significantly. ATI now became part of a larger ecosystem that included CPU architecture, memory systems and software development. This resulted in the first concepts that were only possible through the close integration of both worlds, such as early APU designs, the later HSA vision or the systemic cache philosophy, which later gave rise to the Infinity Cache. At the same time, ATI had to learn to no longer think of GPUs in isolation, but as a building block of a comprehensive computing platform.

Despite all the structural changes, Markham remained the center of GPU development. Many architects who had previously worked on R300 or R420 later went on to develop GCN and RDNA. The technical signature – pragmatic, long-term scalable designs – was retained. The takeover rearranged the balance of power, but did not lead to a cultural break. Rather, a joint development path emerged that is visible today in RDNA4: an architecture that combines decades of ATI GPU experience with AMD’s systemic view of computing architecture. Markham remained the central development location. This was a conscious decision, as the architecture teams there had a unique collective expertise. To this day, Toronto is the brain of the Radeon architecture.

Terascale and the unified shader incision

Terascale was technically one of the biggest breakthroughs in ATI’s GPU history because it introduced a fully unified shader architecture for the first time. Instead of separate pixel and vertex blocks, ATI used VLIW5 shaders to process multiple instructions simultaneously. On paper this was enormously efficient, but in practice it was extremely dependent on the compiler. Games that understood the architecture ran excellently, others barely achieved a fraction of the theoretical performance.

With this transition, the classic 2D units also disappeared. This is exactly where my workstation tests at the time began, which initially aroused great expectations. Terascale showed impressive reserves for cleanly parallelizable 3D workloads and seemed like a step into the future. But as soon as 2D operations or mixed workloads came into play, the architecture showed its sensitive side. Window movements, GUI interactions or professional applications that combined 2D and 3D elements suddenly lost their responsiveness. The scheduler did not fill VLIW packages optimally, shaders waited for suitable instructions, and the GUI competed with shader loads that were actually intended for 3D.

The switch to VLIW4 in later Terascale generations alleviated some of these problems, but did not solve the basic dilemma: the architecture was elegant, but difficult to exploit and unpredictable in everyday use. It was precisely this mixture of progress and frustration that characterized my tests at the time. Terascale offered moments of genuine euphoria when the workloads were right, and moments of sobering head-shaking when even the simplest desktop operations seemed unexpectedly slow. Looking back, Terascale was a necessary interim phase. GCN, RDNA and today RDNA4 were also created because Terascale taught us how central scheduler design, instruction organization and workload prioritization are for the overall architecture.

GCN: A compute machine in a gaming shell

GCN was architecturally profound. Away from VLIW, towards scalar shader units optimized for compute. This made GCN extremely strong in scientific applications, mining, consoles and parallelized workloads. But in gaming, where workloads are dynamic, uneven and often difficult to parallelize, GCN showed weaknesses. Dispatch was sluggish, the scheduler was heavily biased towards concurrent workloads and the latency between instruction packets was higher than NVIDIA.

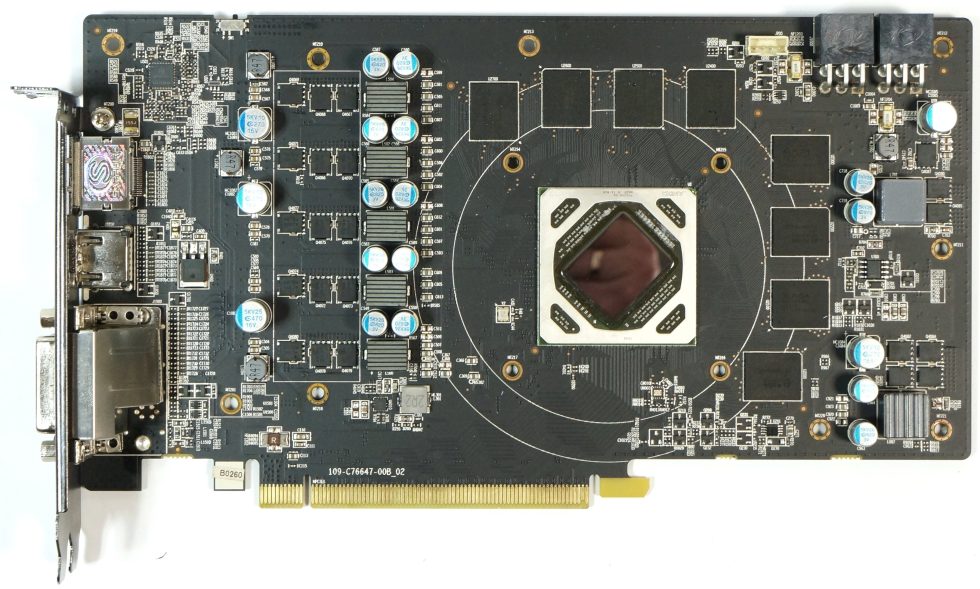

Polaris was one of those GPU generations that people look back on more fondly than when it was released. Technically, it was an evolution within GCN, but a very necessary one and one that gave AMD the headroom to work towards RDNA in peace. The architecture relied on 14nm FinFET, significantly improved memory compression, a more efficient geometry path and an overall more stable utilization of the compute units. The engineers in Markham had deliberately designed Polaris to be conservative but extremely robust, which was immediately noticeable in practice. The cards ran cool, constant and almost stoically reliable, even under continuous loads such as mining, where many a Polaris chip worked for two years without interruption and then continued to run without any problems in the gaming PC.

Anecdotally, I remember the planned launch date, which coincided with my trip to Asia. AMD had scheduled a major Polaris event at the Westin Hotel, only about a hundred meters away from my accommodation in Taipei. In theory, this would have been the most convenient date of my career. In practice, however, AMD had neglected to confirm the booking and the hotel was then booked elsewhere. So we went to Macau at short notice and somewhat chaotically, where AMD spontaneously organized a stage and hotel. This improvisation suited the times: lots of movement, lots of enthusiasm, little planning certainty. I still keep the backpack that I received as a giveaway to this day, not so much because of its material value, but because it reminds me of the Polaris story, which was as down-to-earth as it was turbulent.

Polaris wasn’t a high-end marvel, but it was the GPU that stabilized the mass market. The RX 480 and RX 470 sold millions of units, were used in internet cafes, abused by miners and celebrated by gamers. On the driver side, Polaris was the platform on which AMD tested much of what was later incorporated into Adrenalin and RDNA. And while Vega failed ambitiously and RDNA was still in the pipeline, Polaris literally kept the store running. In many ways, Polaris was the unspectacular but indispensable cornerstone of AMD’s RDNA4, an architecture that integrates machine learning into so many rendering areas for the first time and opens up a clear future for the Radeon brand.

In comparison, Fury was an experiment with enormous risk. The introduction of HBM was bold and technologically intriguing, but the entire board design was expensive, complex and almost impossible to scale for the markets of the time. Fury failed not because of a lack of architectural ideas, but because of a combination of high manufacturing complexity and timing that left little room for maneuver against NVIDIA. Technically, Fury remained one of the most exciting chapters in GPU history; practically, it was a product that struggled to hold its own in the market.

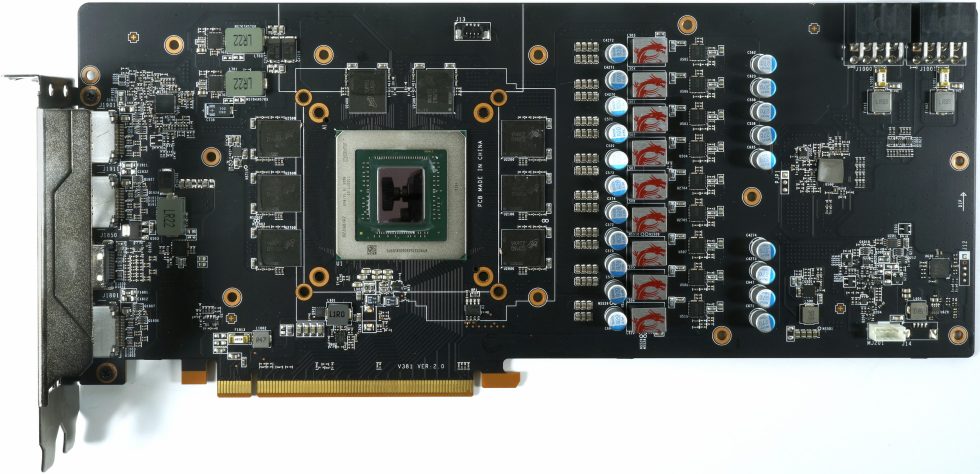

Vega was a similar mix of ambition and reality. Architecturally, Vega was a highly interesting further development of GCN with enormous compute potential, but the architecture was often too cumbersome for games. Scheduling was complex, thermal requirements were high and shader utilization was heavily dependent on very specific workloads. Vega could shine, but only in exceptional cases. It was a GPU that looked excellent on paper and impressed in the lab, but its practical mastery placed high demands on the driver, engine and software.

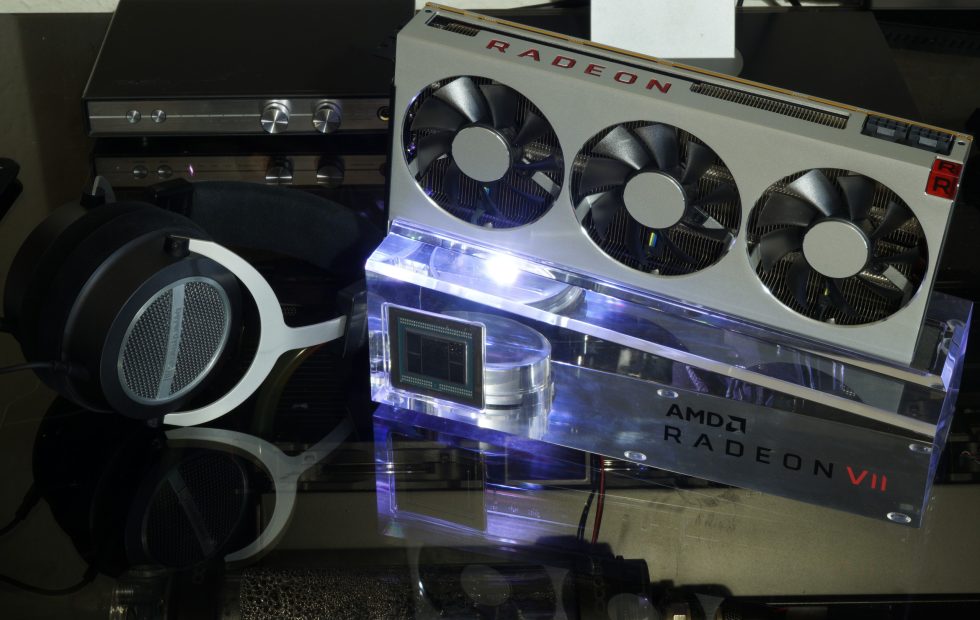

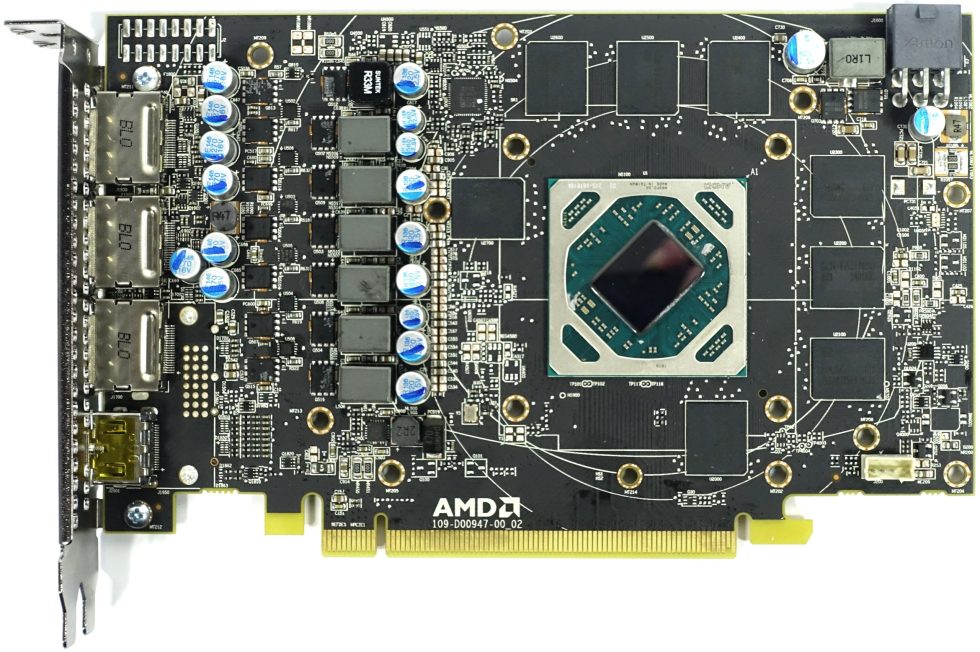

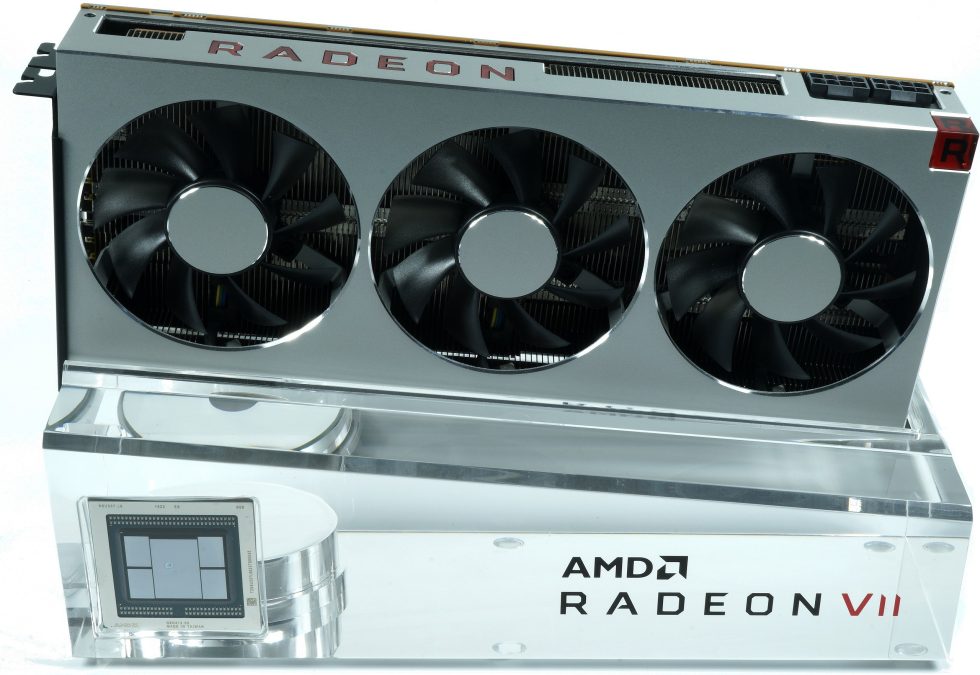

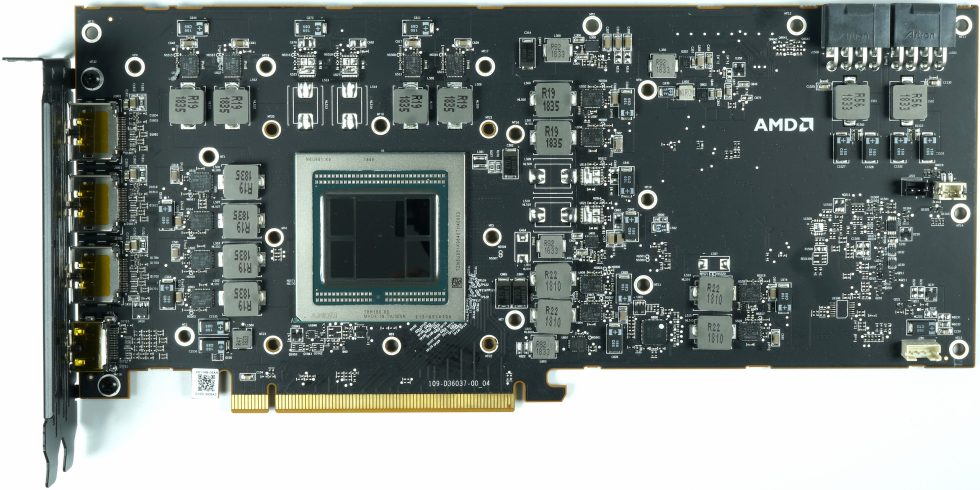

And then there was the Radeon VII – a real unicorn. A card that was never actually intended for the mass market, but was created from production surpluses from the Instinct portfolio. A 7nm chip with massive memory bandwidth, HBM2 and a formal enthusiast claim, which in practice was more of a “showcase” for AMD’s manufacturing progress. The Radeon VII was a technical demonstration, not an official milestone in an existing product family. Its short availability, unusual positioning and idiosyncratic character made it perhaps the most unusual Radeon product of the last 20 years.

All these generations – Polaris, Fury, Vega and the Radeon VII – are symbolic of AMD’s search for a balance between courage and feasibility. Polaris showed that you can win back the trust of users through stability and efficiency. Fury and Vega showed the risks that arise when technical vision runs away from commercial reality. And the Radeon VII demonstrated how closely server, compute and gaming lines are sometimes intertwined. Each of these architectures was a step, an experiment or a necessary intermediate phase on the way to RDNA – and thus to the ML-based future that is becoming a reality today with RDNA4 and the Redstone project. The common thread: Talented architecture, but heavily dependent on software.

RDNA: The real reboot

RDNA was in many ways the much-needed reboot after GCN had reached clear limits in its complexity and compute focus. While GCN was designed to efficiently handle huge, evenly loaded workloads, modern games required an architecture that could react quickly, distribute smaller workload packages more flexibly and clock shaders more efficiently. This is exactly where RDNA came in, and this step was not only evolutionary, but conceptually a break with almost a decade of GPU development.

The most important step was the departure from Wave64 as the basic execution unit. While Wave64 remained possible, RDNA introduced Wave32 as the primary mode – much easier to schedule, more responsive and better suited to typical gaming shaders, which rarely have the perfect parallelism that GCN required. This alone reduced latencies, simplified scheduling and made CU utilization more predictable. On an architectural level, the compute unit was completely restructured. The RDNA CU now consisted of two scalar pipelines, each with its own resources, so that two waves could be processed independently of each other at the same time. The old vector scheduling of GCN gave way to a much more precisely controllable model in which AMD was less dependent on the compiler and engine filling the code perfectly. This allowed the shaders to become more clock-friendly and respond better to the mixed loads that modern games constantly generate.

The cache hierarchy was also a big step forward. RDNA integrated a significantly stronger L1 and introduced a real, architecture-wide L2 refresh, which lowered latencies and improved data proximity to the shaders. Games that previously choked on memory accesses under GCN were suddenly able to work more efficiently because RDNA was not only faster, but also smarter. These cache improvements indirectly paved the way for later ML functions that became relevant in RDNA4.

RDNA 2 introduced the ray tracing accelerators, small dedicated units that handled traversal and intersections in the BVH structure. AMD anchored them directly to the shader front so that they could be used within the wave control. The solution was flexible, but not designed for maximum RT peak performance. Crucially, for the first time AMD treated ray tracing not as an appendage, but as an integral part of the pipeline – a prerequisite for Ray Regeneration as an ML-based denoiser, which was added later in Redstone. The Infinity Cache was also a major innovation. It significantly reduced memory bandwidth requirements, made high resolutions more efficient and compensated for GDDR6 limits. The Infinity Cache was basically a logical counterpart to the cache hierarchies of previous ATI generations – only this time it was designed to be large. It was a reaction to the constant increase in pixel volumes and the need to control energy consumption without throttling the shaders.

RDNA 3 finally dared to break out of the monolithic structure and introduced chiplets. Even if not everything worked smoothly and some expectations in practice fell short of the model calculations, it was a technological step with vision. The logical split between shader die and cache/IO die clearly showed where AMD was heading: greater flexibility, better yield control, lower costs and an architecture that is easier to scale. But the core remains: RDNA was the reboot AMD needed to make Radeon a gaming architecture again. The architecture said goodbye to structural constraints left over from the GCN era, while introducing ideas that are still relevant today. Wave32, new scheduler logic, a modern cache system, ray tracing units, Infinity Cache and chiplet designs all laid the technical foundation for RDNA4.

And RDNA4 in turn opens the door to an era in which classical rasterization and ML inference are on an equal footing for the first time. Every innovation that Redstone makes possible today – FSR4, ray regeneration, ML frame generation or radiance caching – would have been inconceivable without the RDNA restructuring. RDNA is therefore not just a new start, but a foundation for everything AMD has planned in the graphics sector in the coming years.

RDNA4 marks the transition from classic, heuristically controlled rendering processes to real, data-driven models. With FSR4, AMD is relying entirely on neural upscaling models for the first time, which learn from complex patterns in the input signal and enable a more stable reconstruction. The new frame generation no longer calculates intermediate images using rigid motion logic, but uses an ML model that interprets motion sequences and generates more plausible interpolations from them. Ray Regeneration takes a similar approach, replacing the previous heuristic denoiser with a neural model that cleans up noise and incomplete RT samples much more precisely. Finally, Radiance Caching uses machine learning to extrapolate indirect illumination from a small number of rays, thereby massively reducing the computational load. These techniques clearly show that RDNA4 is not just a new architecture, but the beginning of a rendering era in which ML models will become just as important as shaders and caches.

This is no longer the GPU philosophy of the old ATI days. It’s a hybrid render pipeline where traditional shader work and ML inference coexist. The driver becomes the organizer of these models, checks buffers, controls the process, prioritizes resources and decides which ML stage takes effect when. Technically speaking, this is the most profound change since the introduction of programmable shaders. And yet you can still recognize the old ATI spirit in it: courageous, pragmatic, sometimes uncomfortable, but always with one goal – to do things differently and more efficiently than before.

The outlook for 15.00

Today’s NDA release at 3pm is more than just another GPU launch. It’s the moment AMD visibly enters the ML rendering future. Radiance Caching will not take full effect until 2026, but the course has been set. FSR4, Frame Generation and the entire Redstone line show that AMD has completed the learning phase and is now entering an era where ML becomes an integral part of the render path. From the improvised offices in Markham, through the legendary 9800 Pro, the GCN compute monoliths and the RDNA reboots, this path leads to this very moment. A line of development over decades that is expressed not only in transistors, shaders and model architectures, but in a culture that still exists where ATI once began.

15 Antworten

Kommentar

Lade neue Kommentare

Veteran

Urgestein

Mitglied

1

Urgestein

Veteran

Mitglied

Urgestein

Veteran

1

Urgestein

Veteran

Urgestein

Neuling

Mitglied

Alle Kommentare lesen unter igor´sLAB Community →